Case study: Cowrywise uses simple experiments to improve referral rates

The experiments gave them clear, reliable answers within a few short weeks and indicated which strategies to pursue in the longer term.

Challenge

Cowrywise, a micro-investment platform for underserved, digitally native consumers in Nigeria, was steadily growing its customer base. However, the growth team noticed that only a small percentage of customers were coming from referrals. They were puzzled because ratings and reviews from customers were extremely positive, which suggested that customers would be naturally inclined to recommend the app to friends and family.

The team suspected referral rates were low because the mechanism that enabled users to make referrals via the app was cumbersome. Cowrywise realised they needed to do something about this but weren’t sure about the right approach. Rather than guessing, they decided to run two experiments to test which method would give them more referrals from existing customers.

Action

Cowrywise came up with two hypotheses about how to increase referral rates:

- People need to be triggered to make referrals and will respond to one-off campaigns. Reminders, together with incentives, deployed at critical points in the customer timeline when customers are feeling positive about the brand, can help to drive referrals.

- People make referrals when it is easy and top-of-mind, so making UI adjustments to increase the prominence of the referral mechanism and enabling “one-click” execution can help to drive referrals.

They also defined three thresholds for success to measure their hypotheses against, so that analysis would be quick, easy, and protected from post-hoc rationalizations. These thresholds were:

- An increase of 1,500 in the average number of referral-related sign-ups per month. The goal was defined as 3,000 referrals a month, or 100 a day.

- An increase in the number of users that become referrers after three months, to 8% (currently at X%).

- Conversion rates from sign-ups to savers should remain unchanged (between X% and Y%).
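Fixing thresholds in advance means the later analysis reduces to a mechanical check. A minimal sketch of such a check follows; the class, function, and field names are hypothetical, the conversion bounds are passed in because the case study leaves them as X% and Y%, and the figures in the usage lines are made up for illustration rather than taken from Cowrywise data.

```python
from dataclasses import dataclass

# Goals stated in the case study.
MONTHLY_SIGNUP_GOAL = 3000   # referral-related sign-ups per month (about 100 a day)
REFERRER_SHARE_GOAL = 0.08   # share of users who become referrers after three months


@dataclass
class ExperimentResult:
    """Hypothetical container for the three measured outcomes."""
    monthly_referral_signups: float  # average referral-related sign-ups per month
    referrer_share: float            # fraction of users who became referrers
    signup_to_saver_rate: float      # fraction of sign-ups that became savers


def check_thresholds(result, saver_rate_low, saver_rate_high):
    """Return a pass/fail flag for each pre-agreed threshold.

    saver_rate_low and saver_rate_high stand in for the X% and Y% bounds,
    which the case study does not disclose.
    """
    return {
        "signups": result.monthly_referral_signups >= MONTHLY_SIGNUP_GOAL,
        "referrers": result.referrer_share >= REFERRER_SHARE_GOAL,
        "conversion": saver_rate_low <= result.signup_to_saver_rate <= saver_rate_high,
    }


# Illustrative values only; not Cowrywise data.
example = ExperimentResult(
    monthly_referral_signups=2400,
    referrer_share=0.05,
    signup_to_saver_rate=0.30,
)
flags = check_thresholds(example, saver_rate_low=0.25, saver_rate_high=0.35)
print(flags)
```

Because each threshold is evaluated separately, a result can be read as a partial success (for example, conversion held steady while volume fell short) without reopening the definition of success.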

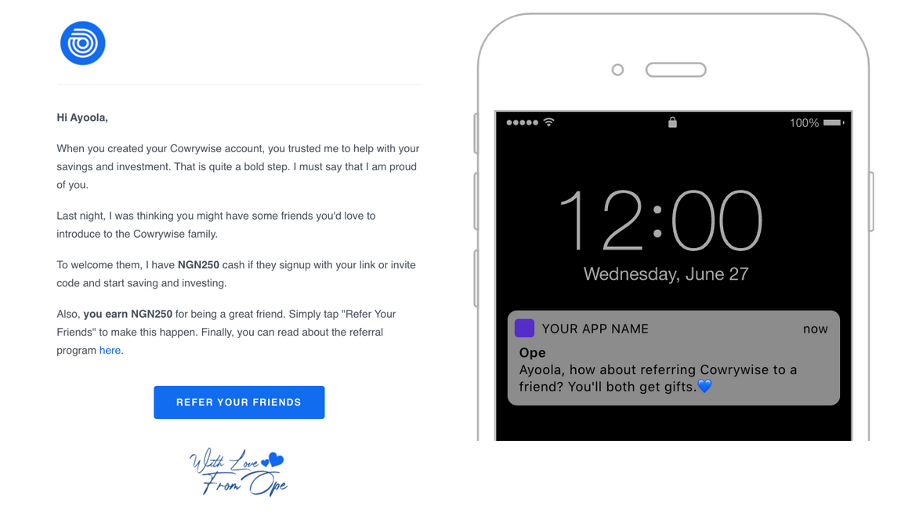

To test hypothesis 1, the team came up with an email campaign that systematically reminded users to refer their friends to Cowrywise after 3, 6, 9 and 12 months of use. They used Customer.io to send users an email containing a prompt to refer their friends. Those who didn’t respond to the email received a push notification the following week with a monetary incentive to refer a friend.

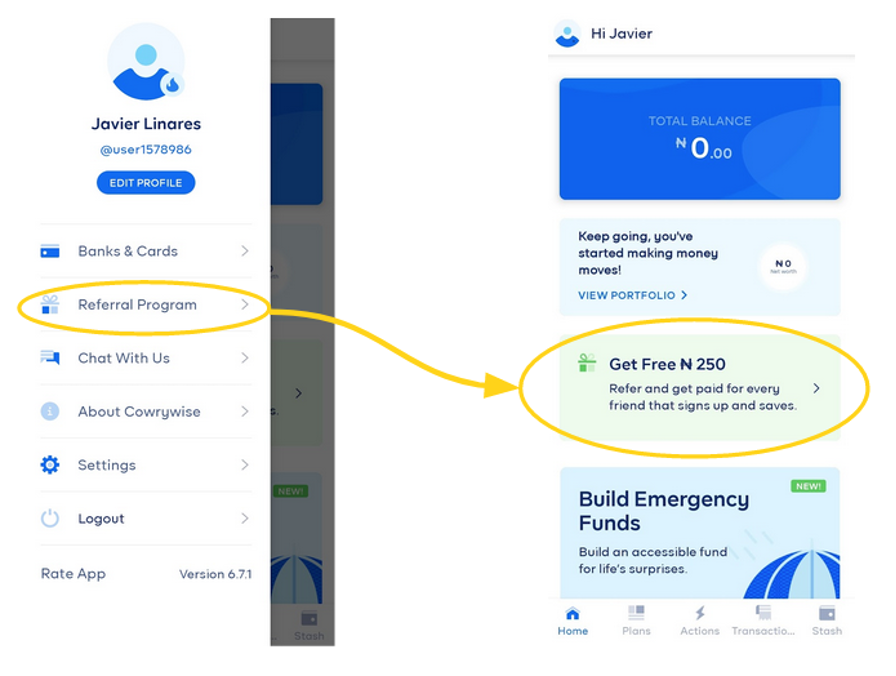

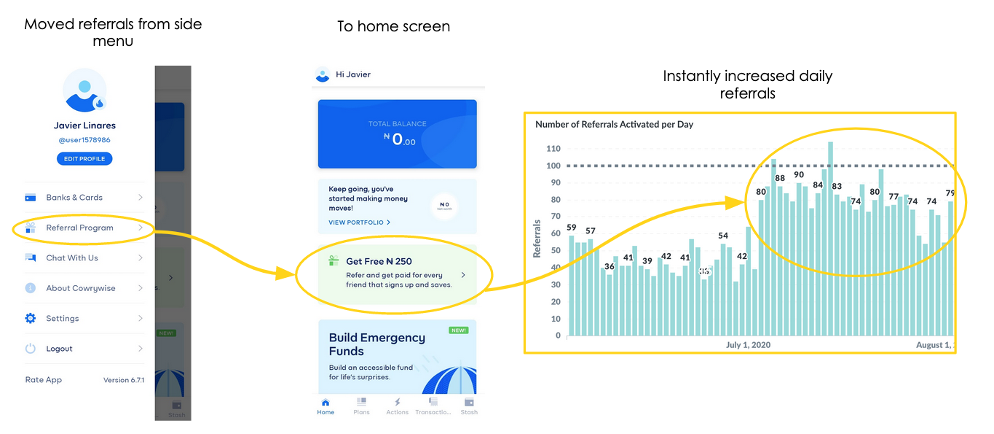

To test hypothesis 2, the team redesigned the home screen to make the referral prompt more attention-grabbing and to highlight the monetary incentive.

Result

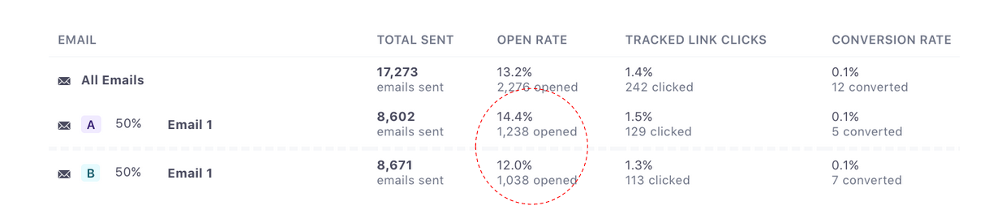

Experiment one produced disappointing results. Although delivery rates were high, email proved to be a questionable channel for calls to action: open rates were low at ~15% and, even worse, click-through rates were only 1%. The team tried two different email variations, but neither met the thresholds for success.

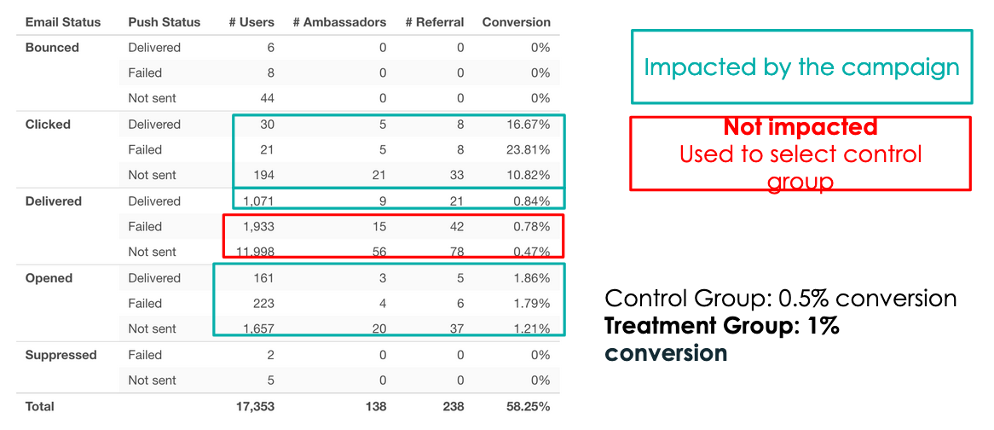

Overall, daily referrals increased slightly, but the team wasn’t sure whether the email was the cause. By using failed email deliveries as a control group, they were able to conclude that the email did increase conversions, but not at rates that would allow them to consider the campaign a success.
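The case study does not say how the comparison against the control group was carried out. One common way to compare two groups like these is a two-proportion z-test, sketched below under that assumption. The function name and all counts are hypothetical, and because failed deliveries are not a randomized control, any result from such a comparison is suggestive rather than conclusive.

```python
import math


def two_proportion_z(conv_a, n_a, conv_b, n_b):
    """Compare referral rates between two groups.

    conv_a / n_a: referrers and users among those who received the email.
    conv_b / n_b: referrers and users among those whose email failed to deliver.
    Returns (rate difference, z statistic, two-sided p-value).
    """
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both groups behave the same.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        raise ValueError("cannot test when the pooled rate is 0 or 1")
    z = (rate_a - rate_b) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return rate_a - rate_b, z, p_value


# Illustrative counts only; not Cowrywise data.
diff, z, p = two_proportion_z(conv_a=120, n_a=10_000, conv_b=10, n_b=1_000)
print(f"difference={diff:.4f}, z={z:.2f}, p={p:.3f}")
```

A small positive difference with a large p-value would match the situation described here: some sign of an effect, but not enough to call the campaign a success.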

In contrast, the results from the test of hypothesis 2 were clear-cut. The team’s dashboard showed a clear “new equilibrium”, with referral rates substantially higher than before.

Although the experiment did not meet the thresholds for success, it confirmed that users make referrals when it is easy and top-of-mind. The team was able to take that insight and transform it into further UI adjustments to increase prominence of the referral mechanism and enable “one-click” execution. They continue to use experiments to consistently increase referrals, iterating on their strategy in each round.

Assessing Product-Market Fit

How do you know if you are ready to start investing in growth or if you need to continue pushing toward product-market fit?